Processes and Adaptation of AI Tools for Novice Content Creators

Copyright © 2025 Korean Institute of Broadcast and Media Engineers. All rights reserved.

“This is an Open-Access article distributed under the terms of the Creative Commons BY-NC-ND (http://creativecommons.org/licenses/by-nc-nd/3.0) which permits unrestricted non-commercial use, distribution, and reproduction in any medium, provided the original work is properly cited and not altered.”

Abstract

The consumption of video content on social media platforms has become a dominant trend, encouraging the emergence of numerous video creators. Platforms such as YouTube and Instagram incentivize creators through profit-sharing mechanisms, making content creation an attractive career option for young people. However, novice content creators often struggle with the complexity of video production, including scripting, filming, editing, and post-production. This study provides structured processes for adapting AI tools to these tasks, which can simplify and optimize production, reduce frustration, and enhance content quality for novice creators. The processes were derived by applying AI tools in practice and testing them for errors, and were then tested by participants in a workshop. Participants’ requests and needs were collected before the workshop and incorporated into one of its sections. After the workshop, all participants were interviewed about whether the processes had helped them, and their feedback was positive.

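Korean Abstract

The consumption of video content on social media platforms has become a dominant trend, spurring the emergence of many video creators. In particular, platforms such as YouTube and Instagram offer incentives to creators through revenue-sharing mechanisms, which increasingly makes content creation an attractive career option for younger generations. However, novice content creators still struggle to create content because of the complexity of video production, including scripting, filming, editing, and post-production. This study aims to propose and validate creation processes, structured according to content characteristics, for using and adapting recently advancing generative AI tools. To this end, we analyzed the creation process with the goal of reducing the frustration novice creators experience when using these AI tools and improving content quality, and we proposed a creation process accordingly. We then refined the process by applying AI tools in practice and testing them for errors. To validate the proposed process, we held a small-scale creation workshop in which we provided creation training and had participants carry out creation tests. Through this, we confirmed the usefulness of the proposed AI creation process along with positive feedback from participants.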

Keywords:

Generative AI, Creators, Co-creation process, Adaptation of AIs

Ⅰ. Introduction

YouTube remains the largest video platform globally, with over 2.49 billion monthly active users as of March 2024, and it supports more than 80 languages across more than 100 countries. Recent data indicate a 50% increase in beginner-focused content and its viewership, reflecting growing demand for entry-level educational videos [1]. Despite creators’ enthusiasm, maintaining a consistent upload schedule is difficult because traditional video production is labor-intensive.

This study underscores the importance of developing optimized workflows that incorporate artificial intelligence (AI) tools to alleviate the challenges of content creation. Despite the growing availability of such technologies, many content creators continue to rely on traditional production methods, which are labor-intensive and hinder consistent content updates. Novice creators in particular face significant barriers when launching new channels, because content production demands a range of technical skills. Moreover, early-stage creators, such as new YouTubers, frequently struggle to grow their audience and typically cannot afford professional support teams before building a substantial following. Common challenges include prolonged production cycles, the continuous demand for new content ideas, maintaining high production quality, limited access to research resources, and inadequate support systems [2]. With the recent proliferation of AI technologies, these tools offer promising ways to mitigate such difficulties and to support more efficient and scalable content creation.

In this study, we propose a simplified co-creative process utilizing generative AI tools to support content creators. To enhance the reliability of our findings, a triangulation approach was employed by comparing data from pre- and post-workshop interviews, self-assessed production outcomes, and the actual content outputs. An online workshop was conducted for individuals intending to begin creating digital content. Prior to the workshop, interviews (N=4) were conducted to gather the needs and expectations of novice content creators, which were then integrated into the workshop design. Following the workshop, additional interviews (N=4) were carried out, during which participants provided qualitative feedback and submitted self-assessed quality ratings of their content. In summary, this research contributes a streamlined co-creation process with generative AIs that can help content creators improve production efficiency, enhance content quality, and reduce associated costs.

Ⅱ. Related Work

With advances in generative AI, large language models (LLMs) and large-scale text-to-image models can now produce high-quality outputs in modalities such as text and images. These models can handle unseen and open-domain tasks using only instructions, a few examples (few-shot prompting), or both. With AI assistance, it is possible to improve the quality of work while saving time. Generative AI supports editing tasks such as upscaling visuals, reformatting content, and suggesting titles and subtitles. Its use in completing videos suggests that future design workflows could integrate generative AI tools to streamline the creative process for content creators [3].

With the rapid development of generative AI, human-AI co-creation has become a significant trend in the creative industries. ReelFramer, a human-AI collaborative system, transforms print news articles into engaging short videos, or “news reels,” for social media platforms [4]. The system combines narrative framing, generative AI, and interactive workflows to help journalists balance information and entertainment, improving production efficiency and accessibility. Its features include automated narrative generation, AI-assisted script creation, and video editing. Although stories can be generated from a single prompt, their quality is still limited: AI-generated stories currently pass 3–10 times fewer creative writing tests than stories written by professionals, across dimensions such as fluency, flexibility, originality, and elaboration [5, 6].

Additionally, Haase and Pokutta proposed a framework that divides human-computer co-creation into several levels, ranging from AI as an assistive tool to AI as a co-creator [7]. In this study, that framework is applied to analyze AI’s role in various stages of content production, such as script writing, image generation, music creation, and video editing, to show how AI enhances rather than replaces human creativity. AI tools significantly reduce production time, allowing creators to focus more on quality. Human-AI co-creativity is also a central issue in the development of generative AI [8, 9], and the synergy between human insight and AI capabilities represents a major trend in creative workflows. Some creative tasks can now be fully automated, and generative AI systems such as LLMs can synthesize novel ideas from large datasets. These examples illustrate the potential of generative AI to augment human creativity and to expand creators’ competence and creative capacity.

Through recent advances in generative AI and content creation, the co-creative process blends human intuition with AI’s computational power, enabling solutions beyond the reach of humans alone. However, the co-creation process with generative AIs is not yet well studied, particularly given the variability across content types and generative models.

Ⅲ. Content Creation Process Based on Generative AI Tools

1. Target Content

To help novice content creators improve the efficiency and quality of their video production, AI tools must be applied in practice. According to prior research [2], content creators can be categorized into three types: participatory entertainers (Type 1), knowledge sharers (Type 2), and highlight capturers (Type 3). Type 1 creators draw inspiration primarily from everyday life and often feature themselves as the main subjects of their videos, for example through dance challenges or role-playing scenarios [3]. Type 3 creators, by contrast, reprocess and edit previously published video material, extracting and compiling highlights from sources such as television shows or films [3].

Both Type 1 and Type 3 rely heavily on video footage, either newly recorded or pre-existing, and involve identifying key moments to highlight in the final product. Their production workflows are therefore similar. Automated video editing tools powered by AI can substantially streamline the identification of highlight moments, requiring minimal human intervention and reducing production time considerably [10].

In contrast, Type 2 content does not typically rely on existing videos. Instead, it emphasizes delivering new, informative content to viewers, such as scientific explanations, educational tutorials, or commentary [3]. For this type, scripting and storyboarding are crucial for communicating the intended message clearly. Creating scripts and storyboards has traditionally been time-consuming, but generative text models such as ChatGPT have simplified these tasks: they can rapidly generate structured content, including full video outlines and scripts. Moreover, AI-driven translation tools now let creators localize their content into multiple languages with a convenience and scalability that traditional production workflows cannot match.
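The storyboard-to-prompt step for Type 2 content can be made concrete with a small sketch. It is not the authors’ tool chain and calls no real API: the `Scene` record and the `build_image_prompts` and `total_duration` functions are hypothetical, and the sketch assumes the storyboard has already been drafted with a language model such as ChatGPT. Repeating one fixed character and style description in every prompt is a common heuristic for keeping a character’s appearance stable across generated images, though it does not guarantee consistency.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Scene:
    """One storyboard row (hypothetical structure, not a tool's format)."""

    index: int          # position of the shot in the video
    narration: str      # script line read over this shot
    visual: str         # description of what the shot should show
    duration_s: float   # planned length of the shot, in seconds


def build_image_prompts(scenes: list[Scene], character: str, style: str) -> list[str]:
    """Turn storyboard rows into text-to-image prompts, in shot order.

    The same style and character descriptions are repeated in every prompt so
    that each image is generated from identical identity cues.
    """
    ordered = sorted(scenes, key=lambda s: s.index)
    return [f"{style}. {character}. Scene {s.index}: {s.visual}" for s in ordered]


def total_duration(scenes: list[Scene]) -> float:
    """Planned running time of the storyboard, in seconds."""
    return sum(s.duration_s for s in scenes)


if __name__ == "__main__":
    storyboard = [
        Scene(2, "Then we head to the night market.", "a crowded night market street", 6.0),
        Scene(1, "Welcome to my channel!", "the host waving at the camera", 4.0),
    ]
    for prompt in build_image_prompts(
        storyboard,
        character="a young woman with a short black bob and a yellow raincoat",
        style="soft watercolor illustration",
    ):
        print(prompt)
    print(total_duration(storyboard))  # 10.0
```

Keeping the storyboard as structured data rather than free text also makes it easy to check the planned running time before any images or clips are generated.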

2. Co-Creation Process with Generative AI Tools for Content Creation

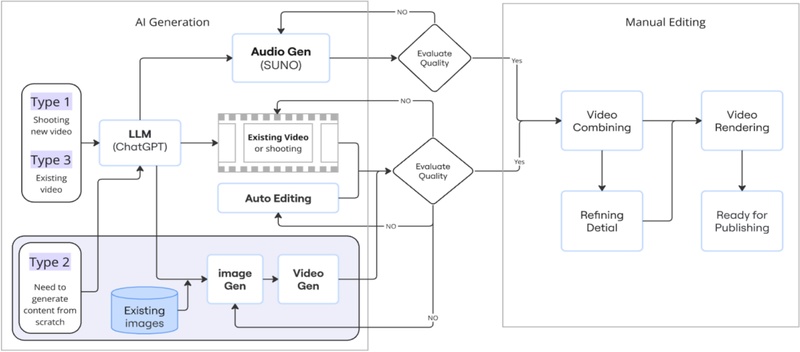

According to collaborative creativity theory, collaboration enhances creativity because innovation does not come from a single flash of insight but emerges from a series of interconnected ideas. Real innovation is therefore typically the product of collective input rather than individual effort [12]. In this context, AI can be conceptualized as an additional cognitive agent, or “another brain,” that supports and amplifies human creativity. Based on the distinct characteristics of each content type, we implement tailored human–AI co-creation workflows that use AI tools strategically. The overall process comprises two primary stages: AI-assisted generation and manual refinement.

In the AI generation phase, multimodal assets such as images, video clips, and audio tracks are produced with generative AI tools. Users then evaluate the intermediate outputs and finalize the content through manual editing. This co-creative workflow is adapted to each of the three content types.

Type 1 content begins with original footage recorded by the creator, while Type 3 content uses pre-existing, publicly available videos. Both types involve identifying and extracting relevant segments from raw video material and therefore share a similar initial workflow. AI-powered auto-editing tools automatically detect highlight moments and compile them into a coherent video, which substantially reduces production time. If the initial output is unsatisfactory, users can iterate by reapplying the auto-editing process. Once the highlight clips are finalized, the process moves into post-production, which involves manual editing and rendering in standard video editing software (see Fig. 1).
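The Type 1 and Type 3 loop can be sketched as follows, assuming an auto-editing tool has already split the footage into segments and given each one a highlight score. The `Segment` type, the greedy selection under a duration budget, and the `review` callback standing in for the creator’s judgment are all hypothetical illustrations; commercial auto-editing tools do not necessarily select highlights this way.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Segment:
    """A stretch of raw footage with a hypothetical highlight score."""

    start_s: float
    end_s: float
    score: float  # assumed to come from an auto-editing tool; higher = more highlight-worthy

    @property
    def length(self) -> float:
        return self.end_s - self.start_s


def select_highlights(segments: list[Segment], budget_s: float,
                      excluded: frozenset[Segment] = frozenset()) -> list[Segment]:
    """Greedily keep the highest-scoring segments that fit in the time budget,
    skipping any the creator has rejected, and return them in timeline order."""
    chosen: list[Segment] = []
    used = 0.0
    for seg in sorted(segments, key=lambda s: s.score, reverse=True):
        if seg in excluded:
            continue
        if used + seg.length <= budget_s:
            chosen.append(seg)
            used += seg.length
    return sorted(chosen, key=lambda s: s.start_s)


def auto_edit_loop(segments: list[Segment], budget_s: float,
                   review: Callable[[list[Segment]], set[Segment]],
                   max_rounds: int = 3) -> list[Segment]:
    """Re-run the selection until the creator rejects nothing or rounds run out.

    `review` stands in for the human check: it returns the segments the creator
    rejects, and an empty set means the compilation is accepted.
    """
    excluded: frozenset[Segment] = frozenset()
    cut = select_highlights(segments, budget_s, excluded)
    for _ in range(max_rounds):
        rejected = review(cut)
        if not rejected:
            return cut  # accepted: move on to manual post-production
        excluded = excluded | frozenset(rejected)
        cut = select_highlights(segments, budget_s, excluded)
    return cut  # last attempt; finish it by hand in the editor
```

Returning the selected segments in chronological order keeps the compiled highlights in the same sequence as the source footage, which is usually what the creator expects before manual post-production.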

Type 2 content follows a more generative process and typically begins from scratch. It starts with the generation of scripts, storyboards, song lyrics, and prompts using a language model such as ChatGPT. These prompts are then used in AI image generators to create visual assets, which are subsequently input into a video generation tool to produce short clips. Simultaneously, a music generator creates melodies based on the generated lyrics. Once all assets—images, videos, and audio—are created, users assess their quality. If any component is deemed unsatisfactory (e.g., irrelevant scenes or mismatched audio), it is refined by returning to the corresponding generation stage. When all components meet the desired quality standards, they are assembled in video editing software to produce the final product, ready for distribution (see Fig. 1).
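One way to express the targeted regeneration in the Type 2 process is as a dependency graph: when the creator rejects one asset, only that stage and the stages built from it are regenerated. The sketch below assumes the stage names and dependencies in `DEPENDS_ON`. Clips generated from images and music generated from lyrics follow the description above, while the links from script to storyboard to prompts are an assumption; real dependencies depend on the tools used. It relies only on Python’s standard-library `graphlib` module (Python 3.9+), and `stages_to_rerun` is a hypothetical helper name.

```python
from __future__ import annotations

from graphlib import TopologicalSorter

# Assumed dependencies between Type 2 assets: each stage lists the stages whose
# output it consumes. Stage names are illustrative, not any tool's API.
DEPENDS_ON: dict[str, set[str]] = {
    "script": set(),
    "storyboard": {"script"},
    "prompts": {"storyboard"},
    "images": {"prompts"},
    "clips": {"images"},
    "lyrics": set(),
    "music": {"lyrics"},
}


def stages_to_rerun(rejected: set[str]) -> list[str]:
    """Return the rejected stages plus every stage built from them,
    ordered so that each stage runs after the stages it depends on."""
    affected = set(rejected)
    changed = True
    while changed:  # propagate the rejection downstream until nothing new is added
        changed = False
        for stage, inputs in DEPENDS_ON.items():
            if stage not in affected and inputs & affected:
                affected.add(stage)
                changed = True
    order = TopologicalSorter(DEPENDS_ON).static_order()
    return [stage for stage in order if stage in affected]


if __name__ == "__main__":
    print(stages_to_rerun(set(DEPENDS_ON)))  # first pass: generate everything
    print(stages_to_rerun({"images"}))       # ['images', 'clips']
```

Calling `stages_to_rerun(set(DEPENDS_ON))` yields a full generation order for the first pass; later calls with only the rejected stages avoid regenerating assets the creator has already accepted. Assembly in video editing software happens only once no stage is rejected.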

Ⅳ. Analysis of Co-Creation Process with Generative AIs

1. Analysis Approach

To better understand individual experiences with the proposed content creation process, we adopted a triangulation framework for analysis [11]. This approach allows a comprehensive evaluation of the process’s effectiveness by comparing multiple data sources. Specifically, three forms of data were triangulated: (1) questionnaires, which captured participants’ self-rated assessments of content quality, perceived efficiency, and tool usability; (2) interviews conducted before and after the workshop, which revealed user motivations, perceived challenges, and changes in perception; and (3) production outputs, which served as tangible evidence of the content produced with AI tools and were evaluated for quality and consistency. Triangulating these sources enabled cross-validation of findings and provided deeper insight into participants’ creative experiences and process-related challenges.
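The paper does not specify how agreement between the three sources was judged. As a hedged illustration only, the comparison can be made explicit by coding each source as supporting, contradicting, or silent on a finding and labeling the finding by how the sources converge. The `triangulate` function, its four labels, and the two-source threshold are assumptions, not the procedure used in this study, and the example findings are hypothetical.

```python
from __future__ import annotations

from collections import Counter

SOURCES = ("questionnaire", "interview", "output")


def triangulate(evidence: dict[str, dict[str, bool | None]],
                min_agree: int = 2) -> dict[str, str]:
    """Label each finding by how the three data sources line up.

    evidence[finding][source] is True (the source supports the finding),
    False (it contradicts the finding) or None/missing (it says nothing).
    """
    verdicts: dict[str, str] = {}
    for finding, by_source in evidence.items():
        votes = Counter(by_source.get(src) for src in SOURCES)
        if votes[True] and votes[False]:
            verdicts[finding] = "divergent"      # sources disagree: revisit raw data
        elif votes[True] >= min_agree:
            verdicts[finding] = "corroborated"   # enough independent support
        elif votes[False] >= min_agree:
            verdicts[finding] = "contradicted"
        else:
            verdicts[finding] = "insufficient"   # too few sources speak to it
    return verdicts


if __name__ == "__main__":
    example = {  # hypothetical coding, not this study's data
        "AI tools shortened production": {"questionnaire": True, "interview": True, "output": None},
        "output quality was high": {"questionnaire": True, "interview": None, "output": False},
    }
    print(triangulate(example))
```

Findings labeled divergent are the ones worth returning to in the raw interview transcripts and videos, since that is where the sources disagree.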

To further understand participants’ creative processes, needs, and obstacles, semi-structured interviews were conducted both before and after the online workshop. The pre-workshop interviews ensured that participants’ questions could be addressed during the workshop, and the post-workshop interviews allowed us to verify whether the proposed co-creation process effectively supported their content production. During the pre-workshop interviews, we introduced the research focus and collected background information, including participants’ previous video production experience, production motivations, tools previously used, desired outcomes, and the social media platforms they intended to use.

This small-scale workshop was designed as a pilot study to evaluate the effectiveness of the tailored Human–AI co-creation process. Participants were recruited based on the following criteria: (1) individuals planning to launch a new channel on platforms such as YouTube, Instagram, or TikTok; (2) individuals with limited prior video production experience; and (3) individuals currently residing in South Korea. Recruitment was conducted via LINE messenger groups, specifically through a post in the “Taiwanese in Korea” community. Four individuals (three female, one male) met the inclusion criteria. The average age of participants was 32 years, and their prior production experience ranged from six months to three years, with a mean of 1.6 years (see Table 1).

Semi-structured interviews were conducted remotely via Zoom prior to the workshop. Each session lasted approximately 30 minutes and was organized into three sections. The first section introduced the study’s objectives and scope. The second section explored participants’ video production backgrounds, including the tools they had used, their motivations, challenges faced, and current needs. The final section addressed participants’ expectations regarding the content creation process and the intended outcomes. All interviews were conducted in Taiwanese Mandarin with participants’ consent, and each participant planned to operate their channel primarily in Taiwanese Mandarin, with some also intending to provide bilingual subtitles. Participants were compensated 50,000 KRW for each interview session.

Subsequently, the workshop was conducted remotely via Zoom video conferencing and lasted approximately two hours. As a reference, a sample channel introduction video was created for participants to review. This sample video included AI-generated elements such as background music, narration, bilingual subtitles, images, and short video clips—all produced using the AI tools introduced during the workshop. The workshop was organized into the following sections: 1) Generating song lyrics, video scripts, storyboards, and AI prompts using ChatGPT; 2) Generating images; 3) Creating short video clips; 4) Combining these short clips into a longer video using video editing software; 5) Rendering the final video for publication; and 6) Providing supplementary explanations tailored to participants’ individual learning needs, including demonstrations of additional AI tools.

Each step was accompanied by a brief tutorial before participants began working on their own projects. After the workshop, participants submitted their completed works exclusively for research purposes. The entire workshop was conducted in Taiwanese Mandarin and was recorded with participants’ consent.

Finally, follow-up interviews were conducted to assess whether the process was effective during participants’ content production. All participants reported that they were able to quickly create their own videos by following the proposed workflow.

2. Analysis Result

Through the workshop, we observed that participants had diverse objectives in using generative AI tools for content creation and that their workflows reflected the corresponding content types. Participant 1 (P1), who aimed to produce travel and lifestyle videos, which typically require original footage, followed the Type 2 process during the workshop to learn how to generate videos from scratch and incorporated AI-generated narration into the final output. Participant 2 (P2) focused on translating existing videos; by following the Type 3 process, P2 learned to use AI tools to generate bilingual subtitles automatically within seconds. Participant 3 (P3), who intended to create cooking tutorial videos, also followed the Type 3 process and used AI to extract highlight moments efficiently from existing video footage. Participant 4 (P4) wanted to produce pet-related content. Following the Type 1 process, P4 learned to use AI tools to identify highlight moments in existing pet videos. P4 also experimented with the Type 2 process to generate new pet-related content with AI-based media generation tools.
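The AI tools P2 used for bilingual subtitles are not named here. The sketch below shows only the target output, the widely used SubRip (.srt) format, assuming transcription and translation have already produced timed cue pairs; the function names `srt_timestamp` and `bilingual_srt` are hypothetical. Each cue contains a sequence number, a `start --> end` time line in `HH:MM:SS,mmm` form, and the original line followed by its translation, with a blank line between cues.

```python
from __future__ import annotations


def srt_timestamp(seconds: float) -> str:
    """Format a time in seconds as an SRT timestamp (HH:MM:SS,mmm)."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def bilingual_srt(cues: list[tuple[float, float, str, str]]) -> str:
    """Build an SRT document in which each cue shows the original line
    above its translation. Each cue is (start_s, end_s, original, translation)."""
    blocks = []
    for number, (start, end, original, translation) in enumerate(cues, start=1):
        blocks.append(
            f"{number}\n"
            f"{srt_timestamp(start)} --> {srt_timestamp(end)}\n"
            f"{original}\n{translation}\n"
        )
    return "\n".join(blocks)  # a blank line separates consecutive cues


if __name__ == "__main__":
    print(bilingual_srt([
        (0.0, 2.5, "大家好，歡迎來到我的頻道", "안녕하세요, 제 채널에 오신 것을 환영합니다"),
        (2.5, 5.0, "今天要介紹首爾的夜市", "오늘은 서울의 야시장을 소개합니다"),
    ]))
```

Stacking both languages in one cue, rather than producing two separate subtitle files, lets a single upload serve viewers of either language.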

Post-workshop interviews revealed a range of perspectives on the content creation process. All participants provided generally positive feedback, particularly noting the usability of the tools and the perceived increase in production efficiency. They also expressed satisfaction with the breadth of content covered during the workshop. Two participants specifically appreciated learning about multiple tools in a short period.

However, several limitations were also identified. P1 noted that the AI-generated narration did not sound natural and did not reflect the accent of native Taiwanese speakers. P2 pointed out that the AI-generated images failed to distinguish accurately between Korean and Taiwanese cultural elements. As a result, the visuals lacked identifiable cultural characteristics, and some contained fictitious or nonsensical text, which points to an ongoing challenge in culturally nuanced AI content generation. P3 expressed a preference for the AI music generation tool Suno but noted problems with visual consistency, such as the main character’s appearance changing across scenes, and anatomical errors such as hands with six fingers. Interestingly, P3 also remarked that, given how convenient the introduced tools were, they were considering investing more in AI tools in the future. P4 encountered difficulties with script refinement and storyboard adjustment but eventually achieved the desired results after multiple iterations with ChatGPT. P4 also commented that the AI tools offered a wide range of creative directions, which helped inspire and enhance human creativity.

We also found that participants spent less time creating content with the assistance of generative AI, although the quality of the resulting outputs varied considerably. Participants who marked “M” on their scripts indicated that they had needed to modify the content after ChatGPT initially generated it. Ratings of the generated images and videos spanned a broad range, reflecting the variability of AI output. For example, P1 reported satisfactory results from text-to-image generation but was dissatisfied with the AI-generated video, whereas P2 experienced the opposite: some generated images were unsatisfactory, but the resulting video met expectations. The “None” label in the auto-editing section indicates that the participant did not use the automated editing feature for their video. Despite these differences in quality, all participants reported that using AI tools saved up to 50% of production time compared with traditional workflows. Because all the AI tools introduced during the workshop offered free trial versions, participants could experiment with multiple platforms and decide which tools to adopt based on output quality and their individual needs.
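The 50% figure above is self-reported rather than measured. If future studies log production times directly, the saving relative to a traditional workflow can be computed as in the minimal sketch below, that is, 100 × (T_traditional − T_AI) / T_traditional. The function name `time_savings_pct` is hypothetical, and the example values are illustrative, not data from this study.

```python
def time_savings_pct(traditional_hours: float, ai_assisted_hours: float) -> float:
    """Share of production time saved by the AI-assisted workflow, in percent,
    relative to the time the same video took with the traditional workflow."""
    if traditional_hours <= 0:
        raise ValueError("traditional_hours must be positive")
    return 100.0 * (traditional_hours - ai_assisted_hours) / traditional_hours


if __name__ == "__main__":
    # Illustrative numbers only: a video that took 10 hours now takes 5.
    print(time_savings_pct(10, 5))  # 50.0
```

Measuring both times for comparable videos would let the self-reported figure be checked against logged data, in line with the triangulation approach.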

Ⅴ. Conclusion

This research focused on processes and adaptation strategies that help novice content creators improve their efficiency and content quality with AI tools. The investigation began by experimenting with production workflows for different content types and comparing the resulting outputs. Based on these experiments, a simplified production process was developed, and its effectiveness was then validated through a workshop. To strengthen the reliability of the findings, a triangulation method was employed, comparing interview data, self-rated production outcomes, and actual content outputs.

As this study represents an initial step, several limitations should be acknowledged, and directions for future research are identified. First, the sample consisted solely of Taiwanese novice content creators residing in South Korea, which may limit the generalizability of the findings. Future studies should seek to include creators from more diverse cultural backgrounds to develop a more representative production process. Second, the AI tools introduced during the workshop were limited to free trial versions and may not reflect the full range of available AI technologies. Subsequent research should explore a broader variety of AI tools to enhance applicability. Furthermore, future studies could incorporate structured questionnaires assessing perceived usefulness, cognitive load, and creative confidence to complement the qualitative data. Inviting expert reviewers to evaluate the produced videos using standardized rubrics could also increase the validity of the results.

Overall, this research contributes to a better understanding of AI tool adoption among novice content creators, enabling them to adapt more effectively in the rapidly evolving AI landscape while improving their production efficiency and content quality. Additionally, it adds to the growing body of literature on human-AI collaboration within the creative industries.

Acknowledgments

This work was supported by the Institute of Information & Communications Technology Planning & Evaluation (IITP)-Innovative Human Resource Development for Local Intellectualization program grant funded by the Korea government (MSIT) (IITP-2025-RS-2022-00156287).

References

- How Many People Use YouTube?, https://seo.ai/blog/how-many-people-use-youtube, (Accessed Apr. 24, 2025).

- J. Kim, H. Kim, “Unlocking Creator-AI Synergy: Challenges, Requirements, and Design Opportunities in AI-Powered Short-Form Video Production,” In Proceedings of the 2024 CHI Conference on Human Factors in Computing Systems (CHI '24), Association for Computing Machinery, New York, NY, USA, Article 171, pp. 1–23, 2024. [https://doi.org/10.1145/3613904.364247]

- T. Brown, B. Mann, N. Ryder, M. Subbiah, J. D. Kaplan, P. Dhariwal, A. Neelakantan, P. Shyam, G. Sastry, A. Askell, et al., “Language models are few-shot learners,” Advances in Neural Information Processing Systems (NeurIPS), Vol. 33, pp. 1877–1901, 2020.

- S. Wang, K. Henderson, M. Hansen, S. Menon, D. Li, J. V. Nickerson, T. Long, K. Crowston, L. B. Chilton, “ReelFramer: Human-AI Co-Creation for News-to-Video Translation,” In Proceedings of the 2024 CHI Conference on Human Factors in Computing Systems (CHI '24), Association for Computing Machinery, New York, NY, USA, Article 169, pp. 1–20, 2024. [https://doi.org/10.1145/3613904.364286]

- T. Anderson, S. Niu, “Making AI-Enhanced Videos: Analyzing Generative AI Use Cases in YouTube Content Creation,” arXiv:2503.03134v1 [cs.HC], 2025. [https://doi.org/10.1145/3706599.3719991]

- S. Colton, G. A. Wiggins, “Computational creativity: The final frontier?,” In Proceedings of the 20th European Conference on Artificial Intelligence (ECAI'12), IOS Press, NLD, pp. 21–26, 2012.

- J. Haase, S. Pokutta, “Human-AI Co-Creativity: Exploring Synergies Across Levels of Creative Collaboration,” arXiv:2411.12527 [cs.HC], 2024. [https://doi.org/10.48550/arXiv.2411.12527]

- A. M. Goloujeh, A. Sullivan, B. Magerko, “Is It AI or Is It Me? Understanding Users’ Prompt Journey with Text-to-Image Generative AI Tools,” In Proceedings of the 2024 CHI Conference on Human Factors in Computing Systems (CHI '24), Association for Computing Machinery, New York, NY, USA, Article 183, pp. 1–13, 2024. [https://doi.org/10.1145/3613904.3642861]

- J. Cromwell, J. Harvey, J. Haase, H. Gardner, “Discovering Where ChatGPT Can Create Value for Your Company,” Harvard Business Review, 2023.

- N. Anantrasirichai, D. Bull, “Artificial intelligence in the creative industries: a review,” Artificial Intelligence Review, Vol. 55, No. 1, pp. 589–656, 2022. [https://doi.org/10.1007/s10462-021-10039-7]

- R. K. Sawyer, Group Genius: The Creative Power of Collaboration, 2nd ed., Basic Books, 2017.

- Northumbria University, Master of Art in Design (Major in Multimedia Design)

- Current: Ph.D. student, Department of Cultural Studies, Chonnam National University

- ORCID: https://orcid.org/0009-0001-3773-744X

- Research interests: media content, generative AI

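- M.S., Department of Robotics, Hiroshima University

- Ph.D., Department of Mechanical Engineering, Osaka University

- Principal Researcher, Industrial Technology Research Institute, Samsung Heavy Industries

- Professor, Graduate School of Medicine, Osaka University

- Current: Professor, School of Artificial Intelligence, College of AI Convergence, Chonnam National University

- ORCID: https://orcid.org/0000-0002-8135-8252

- Research interests: healthcare, intelligent robots, computer vision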

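- Ph.D., Department of Computer Engineering, Daejeon University

- Director, DigiCAP Co., Ltd.

- Adjunct Professor, Department of Game Engineering, Tech University of Korea

- Current: PD, Culture, Sports and Tourism Technology Promotion Center, Korea Creative Content Agency

- ORCID: https://orcid.org/0000-0002-0465-3266

- Research interests: copyright, information security, trustworthy AI, content protection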

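- M.S./Ph.D., Information and Communications Engineering, Gwangju Institute of Science and Technology

- Principal Researcher, VR/AR Research Center, Korea Electronics Technology Institute

- Culture Technology PD, Ministry of Culture, Sports and Tourism / Korea Creative Content Agency

- Current: Professor, Media Content & Culture Technology Major, Graduate School of Culture, Chonnam National University

- Current: Director, Culture Technology Research Institute, Chonnam National University

- ORCID: https://orcid.org/0000-0003-2384-4022

- Research interests: virtual and augmented reality, HCI, culture technology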